ACA risk adjustment management: Higher EDGE-ucation

“ACA risk adjustment management: Going all-out” is the first article in the risk adjustment management series and should be read before the remaining three articles.

The beginning of a school year fills millions of students with anticipation as they face the prospects of new challenges and experiences. The beginning of a coverage year under the Patient Protection and Affordable Care Act (ACA) provides similar anticipation for health plans facing new challenges and experiences but with the added prospect of significant financial risk. Two drivers of this risk and uncertainty are risk adjustment transfers and the External Data Gathering Environment (EDGE) server protocols that support the program. Much like an end-of-year assignment, issuers populate the servers with information used by the Centers for Medicare and Medicaid Services (CMS) for annual risk transfer calculations. While students worry about poor grades, ACA issuers have a lot more at stake, with real money on the line if performance falls on the wrong side of the industry bell curve.

EDGE server management is technically demanding and resource-intensive. As with anything new, a learning curve was expected at first. However, with the third ACA plan year officially in the books, issuers are still looking for a better, faster, and more efficient solution to ease the burden and streamline the process.

EDGE Server 101

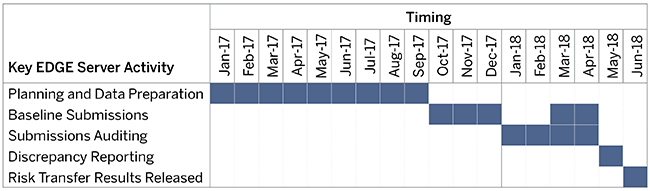

Every issuer with ACA-eligible members is expected to submit benefit year claim and enrollment data to the EDGE server on a predefined schedule, beginning in the fall and culminating near the end of April the following year. Data residing on the EDGE server at the close of the cycle will directly tie to the final risk transfer payment.1 The timeline in Figure 1 illustrates a simplified 2017 EDGE server cycle.

Figure 1: Simplified EDGE Server Timeline for Benefit Year 2017

Issuers host the EDGE server either on-premise or through the cloud via Amazon Web Services (AWS).2 Issuers may outsource process management—including hosting, submission, and maintenance—to a third party focusing on data collection and related technologies.

No “A” for effort

Issuers must submit accurate and timely information to the EDGE server to be eligible for the risk adjustment program and to optimize their risk transfer amounts. Over the past few years, issuers have lost millions of dollars in revenue because of inadequate EDGE server oversight and submission errors. In other words, there is no “A” for effort.

CMS has a stake in ensuring the quality of data residing on the EDGE server. Annually, CMS assigns a risk adjustment default charge (RADC) to issuers submitting insufficient data to the EDGE server, to issuers failing to establish an EDGE server, or to issuers with low membership electing to receive the RADC.3 In addition, CMS has the ability to assess penalties for those out of compliance.4 CMS is also planning to use EDGE server data to recalibrate future risk adjustment models,5 which will likely increase the level of data scrutiny in future years.

What’s the lesson plan?

Below, we highlight the core areas of focus in EDGE server management—the people, the process, and the technology—to help you make the grade this year.

The people

The first step is establishing a clear, dedicated owner in charge of the entire EDGE server submission process. This person should not also lead the organization’s overall risk adjustment management program, given the substantial amount of focus needed for the many details of the annual EDGE server cycle. Further, the role needs to reside within the company even if the EDGE server itself is hosted by an external vendor.

A successful EDGE server owner should have a strong partnership with the team submitting data—whether an internal information technology (IT) department or a third-party administrator (TPA). This person should also regularly attend Registration for Technical Assistance Portal (REGTAP) meetings, communicate new technical guidance where appropriate, and report progress and issues back to risk adjustment stakeholders.

While the EDGE server is a piece of technology, it requires more than just the IT department. In our introductory paper in this series,6 we stressed the importance of the overall risk adjustment leader acting as the liaison to all other areas supporting the flow of data into the EDGE server. These areas are integrally involved at various stages in the timeline and include compliance, legal, actuarial, health management medical coders, and provider management. If an issuer outsources the EDGE server submission process, there is an additional layer of people and processes requiring oversight. Issuers should manage a vendor similarly to an internal functional team—arguably more closely given the lack of direct control. Too many issuers simply hand off the entire EDGE server submission process to a TPA, assuming the work will be timely, consistent with CMS guidance, and inclusive of reasonable quality controls and auditing. All too often these same issuers have experienced delays, miscommunications, lack of transparency, and, worst of all, errors leading to lost risk transfer revenue.

Another “team” vital to the EDGE server process, but rarely mentioned, is CMS. Acknowledging CMS as a team member is typically an afterthought. However, CMS is front and center at every point, offering technical assistance, guidance, documented resources, webinars, and EDGE server software maintenance. Navigating the waters of CMS guidance and documentation can be challenging and, at times, frustrating. However, issuers should view CMS as their partner in the process and reach out as often as necessary to obtain clarification or to assist in troubleshooting.

The process

Plan early—don’t pull consecutive all-nighters and cram the weekend before. Data submissions are tedious and challenging, but the test can be aced with a little foresight and proper planning. Remember, “all roads lead to EDGE,” and the server contains a collection of information needing to be gathered, validated, and eventually uploaded. Data originating in source systems may be altered several times along the way (by more than one department) before it reaches the server. Any program or process affecting these data elements should be coordinated so all the distinct pieces are readily available when required and are consistent with each other. Issuers need to carefully track data from start to finish. Therefore, tying back to source systems is the first and last step in quality control. Process, audit, submit, audit, correct and resubmit, audit. Notice a pattern? This is the (not so) magical formula for each and every EDGE server submission.7
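One way to track data from start to finish is to compare record counts at each hand-off and flag any drop that is not explained by a documented exclusion. The sketch below is illustrative only: the stage names, the counts, and the `find_unexplained_gaps` helper are hypothetical and are not part of any CMS specification.

```python
def find_unexplained_gaps(stage_counts, documented_exclusions):
    """Flag hand-offs where the record count changes by more than was documented.

    stage_counts: ordered list of (stage_name, record_count) pairs, from the
        source system through to the final stage being tracked.
    documented_exclusions: dict mapping a stage name to the number of records
        intentionally removed on the way INTO that stage.
    Returns a list of (from_stage, to_stage, unexplained_difference) tuples.
    """
    gaps = []
    for (prev_name, prev_count), (name, count) in zip(stage_counts, stage_counts[1:]):
        expected = prev_count - documented_exclusions.get(name, 0)
        if count != expected:
            gaps.append((prev_name, name, expected - count))
    return gaps


if __name__ == "__main__":
    # Hypothetical counts for one submission cycle.
    stages = [
        ("source_claims", 1000),
        ("warehouse_extract", 990),
        ("edge_inbound_file", 985),
        ("edge_accepted", 970),
    ]
    exclusions = {"warehouse_extract": 10, "edge_inbound_file": 5}
    for from_stage, to_stage, diff in find_unexplained_gaps(stages, exclusions):
        print(f"{from_stage} -> {to_stage}: {diff} records unaccounted for")
```

Running the same check after every audit step in the cycle keeps the tie-back to source systems repeatable rather than ad hoc.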

EDGE server management involves engagement from every stakeholder throughout the timeline. Communicating back to each one regularly keeps everyone on the same page and fosters teamwork and collaboration, especially when deadlines loom.

Commissioning and maintaining the server should be the focus of the IT department. Just like any other piece of technology, it will require updates. CMS deploys several maintenance releases throughout the year to fix technical issues or sometimes risk adjustment model logic and calculation errors. Issuers should be on the lookout for these releases and carefully consider the impact. Updates affecting the final risk score should be disseminated to the areas involved in pricing, reporting, and forecasting. Other technical updates could affect an issuer’s infrastructure if, for instance, EDGE server file layouts change.

CMS regularly publishes an EDGE server timeline throughout the cycle and discusses key changes or milestones on REGTAP calls. Issuers should make every effort to participate in each REGTAP meeting, at a minimum delegating responsibility among team members to record notes. It is possible to stay up-to-date without attending these calls, but actively participating in or listening to the question and answer sessions can greatly enhance understanding or provide insight from unpublished guidance or other issuers struggling with common concerns.

CMS has two approaches to evaluate issuer data. The first is CMS’s quantity and quality analysis,8 which runs throughout the EDGE server cycle, culminating with a final analysis directly following the final submission deadline. Issuers can use these checkpoints as an opportunity to review the appropriateness of their data and should have reporting in place to mimic the CMS analysis. The second, retrospective approach is the Risk Adjustment Data Validation (RADV). RADV begins several months after the final deadline with an initial validation audit (IVA), and issuers must hire an independent third party to review and audit a subset of members’ claim details, including diagnosis codes accepted on the server. The audit findings from a secondary validation audit (SVA) are used to adjust future risk scores9 and ultimately risk transfers if errors exist.10 Issuers can reduce a tremendous amount of burden during RADV by maintaining complete and easy-to-follow documentation in real time. Leaving a clear paper trail means the IVA vendor will need fewer issuer resources while collecting and organizing the information. Proper documentation will also help ensure timely resolution of outstanding issues well in advance of the deadline.

The technology

The most obvious piece of technology is the EDGE server itself. Upon final provisioning, CMS establishes two environments in every server, each used for separate, but related, functions within the same submission process. The first is a test environment, which is the primary location for trial EDGE server submissions to ensure accuracy and completeness of the data, e.g., proper file layouts, correct data type specifications, and valid Current Procedural Terminology (CPT) and diagnosis codes. After receiving permission from CMS, issuers can remove and resubmit data at any time and as often as needed in the test environment until all internal data validations are satisfied. Think of this as a “test prep” before the exam. The second environment, the production environment, is where the final EDGE server data resides. Information stored in the production environment at the final deadline will be used for the risk transfer calculation. While CMS does allow issuers to essentially wipe and replace data here (through truncation commands), these circumstances require instance-specific permissions from CMS and should not be relied on as a business practice.
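Internal data validations of the kind described above can be run before a file ever reaches the test environment. The following is a minimal sketch under stated assumptions: the field names in `REQUIRED_FIELDS`, the date format, and the `validate_claim` helper are hypothetical and do not reproduce the actual EDGE server file layout, and the set of valid diagnosis codes is assumed to be supplied by the issuer from its own reference data.

```python
from datetime import datetime

# Hypothetical field names; the real EDGE file layouts are defined by CMS.
REQUIRED_FIELDS = ("claim_id", "member_id", "service_date", "diagnosis_codes")


def validate_claim(record, valid_diagnosis_codes):
    """Return a list of error strings for one claim record (empty if clean)."""
    errors = []

    # Layout check: every required field must be present and non-empty.
    for field in REQUIRED_FIELDS:
        if not record.get(field):
            errors.append(f"missing:{field}")

    # Data type check: the service date must parse as YYYY-MM-DD (assumed format).
    service_date = record.get("service_date")
    if service_date:
        try:
            datetime.strptime(service_date, "%Y-%m-%d")
        except (ValueError, TypeError):
            errors.append("bad_date:service_date")

    # Code check: each diagnosis code must appear in the supplied reference set.
    for code in record.get("diagnosis_codes") or []:
        if code not in valid_diagnosis_codes:
            errors.append(f"unknown_diagnosis:{code}")

    return errors


if __name__ == "__main__":
    reference_codes = {"E119", "I10"}  # illustrative subset only
    claim = {
        "claim_id": "C2",
        "member_id": "",
        "service_date": "2017-13-01",
        "diagnosis_codes": ["E119", "XXX"],
    }
    print(validate_claim(claim, reference_codes))
```

Catching these errors internally leaves the test environment for confirming that a submission behaves as expected, not for discovering basic layout problems.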

The other technology impacting the process is an issuer’s internal data warehouse and the procedures to extract, clean, and load data into the EDGE server. Data warehouses play a dual role of supplying data and reconciling EDGE outputs. Tracking the number of records in the source system all the way to the EDGE server outbound files is the first step to validate that all relevant data is being accurately captured. In addition, it is important to audit member-level detail (e.g., risk scores and member months) on the EDGE server against calculations performed directly from the internal data warehouse. Tying to a source outside the EDGE server will highlight data inconsistencies and reveal opportunities to more fully capture risk scores. It also allows for error corrections to be prioritized, which is important given the short time between full benefit year data availability and the final submission deadline. Again, workflow management should be set up at the beginning of the cycle so adequate resources are available to assist with data investigation and error resolution throughout the entire process rather than just at the end.
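The member-level audit described above can be sketched as a comparison of two summaries keyed by member. This is an illustration, not a prescribed method: the `MemberSummary` structure, the `reconcile_members` helper, and the score tolerance are assumptions, and the way each summary is derived from the warehouse or from EDGE server reports is left to the issuer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemberSummary:
    member_id: str
    member_months: int
    risk_score: float


def reconcile_members(warehouse, edge, score_tolerance=0.001):
    """Compare member-level detail from two sources.

    Returns a list of (member_id, issue) tuples, sorted by member_id.
    """
    wh = {m.member_id: m for m in warehouse}
    ed = {m.member_id: m for m in edge}
    issues = []
    for member_id in sorted(wh.keys() | ed.keys()):
        w, e = wh.get(member_id), ed.get(member_id)
        if e is None:
            issues.append((member_id, "missing_on_edge"))
        elif w is None:
            issues.append((member_id, "missing_in_warehouse"))
        else:
            if w.member_months != e.member_months:
                issues.append((member_id, "member_months_mismatch"))
            if abs(w.risk_score - e.risk_score) > score_tolerance:
                issues.append((member_id, "risk_score_mismatch"))
    return issues


if __name__ == "__main__":
    # Hypothetical member summaries from each source.
    warehouse = [
        MemberSummary("M1", 12, 1.250),
        MemberSummary("M2", 6, 0.800),
        MemberSummary("M3", 12, 2.100),
    ]
    edge = [
        MemberSummary("M1", 12, 1.250),
        MemberSummary("M2", 5, 0.800),
        MemberSummary("M4", 3, 0.400),
    ]
    for member_id, issue in reconcile_members(warehouse, edge):
        print(member_id, issue)
```

Sorting or weighting the resulting issues by member months or risk score is one possible way to prioritize corrections when time before the final deadline is short.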

So did you pass?

There must be ownership for every part of the risk adjustment management program. Arguably, EDGE ownership is the most important aspect. It doesn’t matter how robust the coding completeness efforts, how well managed the population, how high the risk score—if the data on the EDGE server is inaccurate or incomplete, the final transfer payment will be adversely impacted.

Much more than good grades are on the line. Without a robust project plan, clear ownership, and an extensive series of checks and balances, issuers could miss out on a significant amount of compensation. While the rules and specifications of the EDGE server will evolve, planning and implementing with the appropriate people, processes, and technology can ensure you are at the head of the class each and every year.

1Not accounting for any impacts from Risk Adjustment Data Validation or the 2018 high-risk pool incorporated into the risk adjustment program.

2REGTAP. EDGE Server Provisioning Computer Based Training (CBT): Text-Only Version. Retrieved November 6, 2017, from https://www.regtap.info/uploads/library/DDC_Provisioning_TextOnly_5CR_032816.pdf.

3CMS (August 15, 2017). Distributed Data Collection (DDC) for Risk Adjustment (RA): 2017 Benefit Year EDGE Server Status Reporting. Health Insurance Marketplace Program Training Series. Retrieved December 10, 2017, from https://www.regtap.info/uploads/library/DDC_ESStatReporting_081517_5CR_081517.pdf.

5Federal Register (December 22, 2016). Patient Protection and Affordable Care Act; HHS Notice of Benefit and Payment Parameters for 2018; Amendments to Special Enrollment Periods and the Consumer Operated and Oriented Plan Program. Retrieved November 6, 2017, from https://www.federalregister.gov/documents/2016/12/22/2016-30433/patient-protection-and-affordable-care-act-hhs-notice-of-benefit-and-payment-parameters-for-2018.

6See http://www.milliman.com/insight/2017/ACA-risk-adjustment-management-Going-all-out/.

7There are ways to make reconciliation efforts more efficient. Review the following article for additional details: https://www.milliman.com/en/insight/taking-the-edge-off-minimize-stress-while-maximizing-aca-risk-adjustment-through-edge-ser.

8CMS (January 3, 2017). Distributed Data Collection (DDC) for Reinsurance (RI) and Risk Adjustment (RA): Quantity and Quality Data Evaluation. Health Insurance Marketplace Program Training Series. Retrieved November 5, 2017, from https://www.regtap.info/uploads/library/DDC_Slides_010317_5CR_010417.pdf.

9Errors include incorrect diagnoses or demographics as well as invalid or missing medical record documentation. See https://www.regtap.info/uploads/library/ACA_HHS_OperatedRADVWhitePaper_062213_5CR_062213.pdf.

10CMS (August 16, 2017). IVA Entity Audit Results Submission: ICD, XSD, and XML Guidance. Health Insurance Marketplace Program Training Series. Retrieved November 5, 2017, from https://www.regtap.info/uploads/library/HRADV_slides_081617_5CR_081717.pdf.
