In my last article, “Regulated software – continuous delivery to the risk-averse,” I closed by saying, “Transformation happens by building empathy for the customers and solving their problems. It certainly doesn’t come from one group of people forcing their way of working on another.” Milliman’s work as consultants to the insurance and financial services industry gives our product team the opportunity to work closely with our consultants to bring innovations to the industry. This collaboration builds the empathy we need to have a product team of missionaries. The legendary venture capitalist (VC) John Doerr, author of Measure What Matters, argues, “we need teams of missionaries, not teams of mercenaries.” A related article expands on this point: “Teams of missionaries are engaged, motivated, have a deep understanding of the business context, and tangible empathy for the customer.”

While we were working on a large actuarial transformation, we noticed something surprising. It was one person’s job to take the changes made by each actuary and combine them into a single actuarial model. That person’s work was critical, and they did an excellent job, but it baffled us software engineers that the role was needed at all. I’ve been writing software long enough to remember the role of the configuration manager, the person responsible for bringing the team’s changes together into a single code line. Our engineering team at the time was developing the Integrate platform using Agile methods and Extreme Programming, which had no need for such a person because our tools (Git, hosted at the time on GitHub) handled it all for us. So we suggested, “Why don’t you just use Git?” It turns out that one does not simply use Git when one is an actuary who has never used source control before. It’s possible to add an Excel spreadsheet (Access database, MG-ALFA® model, etc.) to a version control system (Subversion, Git, etc.), but it’s almost impossible to manage conflicts between concurrent changes from a team of users, because version control tools merge text line by line and cannot meaningfully merge these binary files. Nor could we simply turn the MG-ALFA binary models into text, because users would have little idea how to relate changes in our user experience to the underlying text file. I know this from helping designers who used Microsoft Blend (a visual tool for building user interfaces) understand XAML (the code generated by the visual tool) just so they could collaborate and merge conflicts in their user interfaces. Through the collaboration between our consultants and our engineers, we understood that the answer was not to force our way of working on actuaries, but to tailor it to this specific audience.

This actuarial transformation was happening at the same time as another huge technology transformation: the move to software as a service (SaaS) and the cloud. Microsoft was moving Office to a SaaS offering, creating Office 365 to compete with Google’s compelling cloud-based alternative, Google Docs. What Google did well was enable collaboration; what Microsoft had at the time was SharePoint, a place where you could store files and check them out. It was the same problem we had: we could easily share a model, but we couldn’t support collaboration on it. So we set out to enable actuaries to build models collaboratively, just as Google had enabled everyone to write documents collaboratively. Google could have said, “Use Git and Markdown.” It did not, because it understood that not everyone could just use Git and Markdown.

Why is collaboration important?

Fred Brooks, in his seminal work The Mythical Man-Month, made an important observation that has become known as Brooks’s law: “adding manpower to a late software project makes it later.” He gives three reasons: new people take time to ramp up, communication overhead grows roughly with the square of the team size, and tasks are not easily divisible. During this large actuarial project, the team size was fairly static, and the same experienced consultants worked on it from start to finish, but they were far from achieving the productivity of a lean software engineering team because of the communication overhead imposed by poor tools. To solve the problem, we wanted to add new and better tools to the mix, but the learning curve would have outweighed the productivity gained. The next logical option was to throw more people at the problem, but they would also need to be trained and coordinated, destroying any potential gain. Brooks’s law wins again! Better tools alone are not the answer. The Agile Manifesto values “individuals and interactions over processes and tools.” But what happens when tools encumber individuals and interactions? Projects get even later.
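
Brooks makes the communication argument concrete: if every part of a task must be coordinated with every other part, the number of pairwise communication channels among n people is the number of ways to choose two of them. The figures below are simple arithmetic on that formula, not measurements from this project:

```latex
C(n) = \binom{n}{2} = \frac{n(n-1)}{2}, \qquad
C(5) = 10, \quad C(10) = 45, \quad C(20) = 190
```

Doubling a team from 10 to 20 people more than quadruples the channels (45 to 190), which is why coordination, rather than raw effort, comes to dominate as teams grow.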

Another phenomenon was also affecting the actuarial team’s productivity, Conway’s law: “Any organization that designs a system (defined broadly) will produce a design whose structure is a copy of the organization's communication structure.” In this case, the model structure mirrored the organizational structure, resulting in a separate model for each team. However, because of regulatory change, a single actuarial model had become much more efficient in both the short and the long term. This heightened the need for collaboration, because it now had to span business units rather than stay within a small, tightly integrated team. Because the existing tools were not built for broad collaboration, the processes needed to work in parallel stifled agility and demanded much more rigorous planning and dependency management.

A third force compounds these two: regulatory burden. An actuarial model is more than just actuarial calculations. Regulations now recognize the breadth of what is in scope in producing financial results, and the control burden on actuarial teams is increasing. These controls are best met with automated model execution, which includes automating the data processes that feed the model and produce financial reports. A siloed approach leads to data quality issues and a high frictional cost of change because of inefficient communication structures and change management processes. But this digital transformation of the actuarial function is hard, and actuarial teams often spend a large amount of time executing models and evidencing control over that execution. This is discussed in much more detail in “An Emerging Risk in 2022,” which argues that this growing complexity, combined with a lack of end-to-end integrated tools, is creating operational risk. Here Brooks’s and Conway’s laws combine in a dangerous way if you care about productivity. And we do care about productivity, because becoming an actuary takes a lot of hard work and time. A recent Oliver Wyman study, “Actuary Versus Data Science,” shows that the number of entry-level actuarial exam takers fell from a peak of 18,296 in 2013 to a low of 8,868 in 2020, a drop of more than half. It would be foolish, and a waste of scarce talent, to spend actuaries’ time on this process overhead. Software engineering teams face similar challenges and have developed automated approaches, such as automated testing, continuous integration, continuous delivery, and infrastructure and policy as code, to overcome process friction and build controls into the process.
These approaches owe much to the effort to bring lean manufacturing concepts into the software industry, a movement that started with the book The Goal: A Process of Ongoing Improvement, which underpins much of Gene Kim’s work in The Phoenix Project: A Novel About IT, DevOps, and Helping Your Business Win.

How can tools help collaboration?

Tools alone cannot overcome the challenges described above. To create meaningful improvements in productivity, organizational structures must change. My colleague Van Beach has spoken about this in his work on the case for the chief modeling officer. But as Van points out, technology can enable a new operating model. We can learn from other industries that have overcome similar challenges to scaling. Git, and later GitHub, have been huge enablers of collaboration; open-source software fuels the innovation we’re seeing in the world. Open source would have happened without these tools, but not as fast. What was it about Git and GitHub that helped so much, and how is that helping our actuarial teams?

Agile software teams break work down into features and then divide those features among engineers. This division of labor is based on a functional decomposition of work, where the work aligns to business value defined by the feature. Ideally each feature can be verified and released independently so value can be incrementally delivered to market. Although the features are independent units of work, they combine into a single product. Software teams use application lifecycle management (ALM) tools to manage this process, and typically these tools provide capabilities to manage the requirements, source code, and deployments.

An actuarial team can benefit from the same decomposition of work and division of labor, and even the same tools. To support this, we added functionality to Integrate for managing concurrent branches of work, so that modeling teams could decompose changes into features and work on them independently. We also developed functionality that lets users merge their modeling changes and resolve the conflicts that occur when users make overlapping changes concurrently. By improving the tools actuaries already use, rather than burdening them with entirely new bodies of knowledge, this lets actuarial teams adopt the collaboration methodology that software engineers have used successfully for years.
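
To make the idea of merging concrete, here is a minimal sketch of a three-way merge, the technique Git-style tools use: each side’s edits are compared against the common version both people started from. It assumes, purely for illustration, that a model can be represented as a dictionary mapping hypothetical component names (assumption tables, rates) to their definitions; it is not how Integrate or MG-ALFA actually represent or merge models. Components changed on only one side merge automatically, while components changed differently on both sides are reported as conflicts for a person to resolve.

```python
from typing import Any, Dict, List, Tuple

# Sentinel marking a component that is absent from one version of the model.
_MISSING = object()


def three_way_merge(
    base: Dict[str, Any],
    ours: Dict[str, Any],
    theirs: Dict[str, Any],
) -> Tuple[Dict[str, Any], List[str]]:
    """Merge two edited copies of a model that share a common base version.

    Models are hypothetical dicts of component name -> definition.
    Returns (merged_model, sorted list of conflicting component names).
    """
    merged: Dict[str, Any] = {}
    conflicts: List[str] = []
    for name in sorted(set(base) | set(ours) | set(theirs)):
        b = base.get(name, _MISSING)
        o = ours.get(name, _MISSING)
        t = theirs.get(name, _MISSING)
        if o == t:
            result = o  # both sides agree (or neither changed it)
        elif o == b:
            result = t  # only "theirs" changed (or deleted) this component
        elif t == b:
            result = o  # only "ours" changed (or deleted) this component
        else:
            conflicts.append(name)  # overlapping edits: a person must decide
            result = o  # keep "ours" provisionally until resolved
        if result is not _MISSING:
            merged[name] = result  # absent results (deletions) are left out
    return merged, conflicts


if __name__ == "__main__":
    base = {"mortality_table": "Table A", "lapse_rate": 0.05, "discount_rate": 0.03}
    actuary_1 = {**base, "lapse_rate": 0.06}  # edits lapses only
    actuary_2 = {**base, "mortality_table": "Table B"}  # edits mortality only
    merged, conflicts = three_way_merge(base, actuary_1, actuary_2)
    print(merged)  # {'discount_rate': 0.03, 'lapse_rate': 0.06, 'mortality_table': 'Table B'}
    print(conflicts)  # []

    actuary_3 = {**base, "lapse_rate": 0.07}  # also edits lapses: overlaps actuary_1
    _, conflicts = three_way_merge(base, actuary_1, actuary_3)
    print(conflicts)  # ['lapse_rate']
```

Comparing against the shared base is what lets the merge tell “only one person changed this” apart from “both people changed this,” which a simple two-way comparison of the edited copies cannot do.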

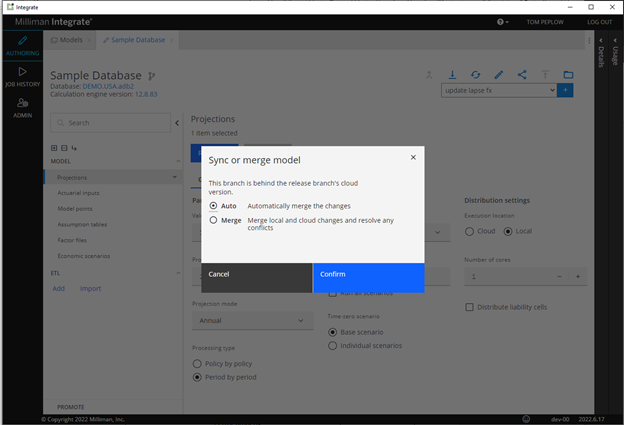

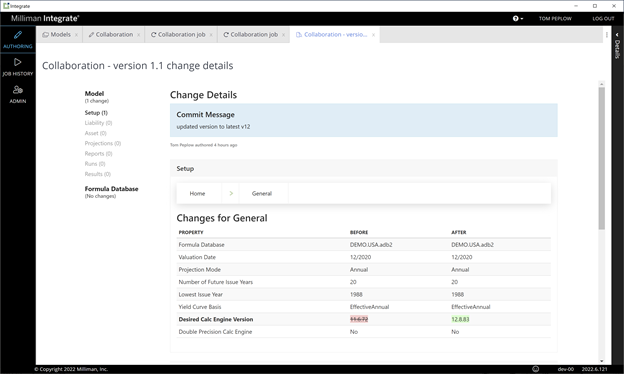

Figure 1 depicts how one person’s change is merged with another person’s, with the system able to automatically combine the changes. Figure 2 shows the audit report generated after any change is made to the model. These two approaches combine to bring collaboration and auditability seamlessly into the day-to-day workflow of actuarial modelers.

Figure 1: Synchronizing changes

The broader impact of improved tools

A large part of collaboratively building a solution (a software product or an actuarial model) is long-term ownership by a team. One of the more insightful definitions of software development comes from Alistair Cockburn, who describes it as a “cooperative game of invention and communication.”1 In his work, he talks about leaving “sufficient residue” so that others can join the game or maintain the solution in the future. One of the lowest-friction forms of this residue is the team’s collective memory, because that communication channel has high bandwidth and low latency. Open-source projects often have a team of maintainers who are responsible for approving the community’s contributions to the project. To manage this process, GitHub developed a mechanism called a “pull request,” through which contributors collaborate with maintainers to get their work incorporated into the project. The maintainers are responsible for preserving the conceptual integrity of the solution: they need to assess whether a change is a good one, along with its risks, mitigations, and so on. This low-friction channel for invention and communication is immensely valuable for distributed development. Pull requests leave additional residue that informs future decisions, and they cultivate new maintainers, so the community flourishes and the project survives even as key people leave.

One benefit you get for free from this is auditability: each change is retained and available for review in a log, including the discussion that accompanied it as it was iteratively improved until it was approved. With a requirements management tool layered on top, you have traceability from code changes to requirements (or bugs), and with test management tooling, you have demonstrable quality controls. This transparency provides the governance needed to demonstrate many of the controls regulators require, with very little overhead to produce audit reports during an audit.
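
The traceability described above can be sketched as a change log in which every change carries a link to a requirement or bug and a record of who approved it. This is a minimal illustration under stated assumptions: the ChangeRecord fields, the ChangeLog class, and the rule that a change approved by its own author counts as an exception are all hypothetical, not features of GitHub or Integrate. The audit report groups changes by requirement, and the exceptions list surfaces the changes an auditor would most likely ask about.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional


@dataclass(frozen=True)
class ChangeRecord:
    """One change to a model; every field name here is hypothetical."""
    change_id: str
    author: str
    requirement_id: str  # link to the requirement (or bug) that motivated it
    summary: str
    approved_by: Optional[str]  # None until a reviewer signs off
    timestamp: datetime


@dataclass
class ChangeLog:
    """Change history that offers only append and read operations."""
    _records: List[ChangeRecord] = field(default_factory=list)

    def record(self, change: ChangeRecord) -> None:
        self._records.append(change)

    def audit_report(self) -> Dict[str, List[str]]:
        """Trace each requirement to the changes made for it, in log order."""
        report: Dict[str, List[str]] = {}
        for r in self._records:
            report.setdefault(r.requirement_id, []).append(r.change_id)
        return report

    def exceptions(self) -> List[str]:
        """Changes never approved, or approved by their own author (assumed rule)."""
        return [
            r.change_id
            for r in self._records
            if r.approved_by is None or r.approved_by == r.author
        ]


if __name__ == "__main__":
    log = ChangeLog()
    now = datetime.now(timezone.utc)
    log.record(ChangeRecord("C-1", "alice", "REQ-7", "Update lapse assumption", "bob", now))
    log.record(ChangeRecord("C-2", "bob", "REQ-7", "Fix lapse formula", None, now))
    log.record(ChangeRecord("C-3", "carol", "BUG-3", "Correct rounding", "carol", now))
    print(log.audit_report())  # {'REQ-7': ['C-1', 'C-2'], 'BUG-3': ['C-3']}
    print(log.exceptions())  # ['C-2', 'C-3']
```

Because records are frozen and the log class only offers methods to append and read, the evidence accumulates as a by-product of the normal workflow rather than being assembled after the fact for an audit.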

These controls exist to demonstrate that best practices are being followed. Actuarial teams can spend a lot of time, and experience a lot of frustration, working with auditors on change management. This is not the case for engineering teams, because the tools they use to get the job done also support the change process and provide the evidence with no additional overhead.

Figure 2: Audit report of a change

Controlled agility

I’ll close by saying that this process is a means to deliver value to market. For actuaries, models help insurance companies understand the risks they are exposed to and put mitigations for those risks in place. As discussed in the “Emerging Risk” article I mentioned earlier, complexity and fast-moving environments require adaptive systems. Rigid processes and controls create friction when they need to change, and then bottlenecks emerge in producing outcomes. Optimal processes quickly become suboptimal when they are hard to change. Ironically, this is becoming a significant operational risk in managing the very risks that actuarial functions exist to address. Adding more people to the process exacerbates the problem while increasing overhead. We are entering a troubling economic period, with more uncertainty and less financial stimulus. Your talented and scarce actuaries need to be able to solve a wide array of problems with lean processes, supported by the right tools, with very little frictional cost to learn, execute, and adapt. Providing a glide path for actuarial teams to adopt lean manufacturing principles, as was done for software engineers, will further accelerate the digital transformation of the risk function. This is the mission that drives my team to deliver value to the market: to help evolve the way actuaries work so they can manage risk faster and with more confidence.

1 Alistair Cockburn, Agile Software Development: The Cooperative Game (second edition).